The monitoring of on-farm conservation practices, such as winter cover crops and conservation tillage, is fundamental to advancing sustainable agriculture and protecting environmental resources like the Chesapeake Bay. However, the performance of these practices is highly variable, making landscape-scale assessment a significant challenge. This briefing document synthesizes research on the application of remote sensing and data science to measure and map these agricultural practices with greater accuracy and efficiency.

The central finding across multiple studies is that integrating different types of satellite data, specifically optical imagery (from sensors like Landsat and Sentinel-2) and Synthetic Aperture Radar (SAR, from Sentinel-1), consistently yields more accurate results than using either data type alone. This fusion of technologies helps overcome the limitations of individual sensors, such as cloud cover for optical data and complex signal interactions for SAR.

Despite these advancements, key challenges persist. A primary limitation is the "saturation" of common optical vegetation indices at high biomass levels, which can lead to underestimation of the most successful cover crops. While integrating SAR data and using advanced optical bands (e.g., red-edge) can mitigate this issue, it remains an area for future research involving machine learning and process-based models. Furthermore, all remote sensing analysis is critically dependent on robust, in-field "ground-truth" data for calibration and validation. Access to detailed agronomic management data and consistent field sampling are indispensable for developing reliable models to translate satellite signals into meaningful conservation performance metrics. These advanced monitoring techniques are a cornerstone of the broader field of Precision Agriculture, which leverages data to enhance productivity and environmental stewardship.

1. The Challenge: Quantifying Variable Conservation Performance

The effectiveness of agricultural conservation practices varies significantly across fields, farms, and seasons due to differences in management, soil properties, and climate. This variability complicates efforts to quantify their environmental benefits, such as reducing the transport of pollutants like nitrates to waterbodies.

- Cover Crop Variability: Cereal rye, the most common winter cover crop in the United States, illustrates this variability. A comprehensive database covering 208 site-years across the eastern U.S. found a mean biomass of 3,428 kg/ha with a standard deviation of 3,163 kg/ha. A spread nearly as large as the mean indicates a very wide range of outcomes.

- Environmental Impact: The presence, type, and quality of winter vegetation cover directly influence water quality. Bare soils can act as an accelerator for pollutant transport, whereas healthy cover crops serve as an obstacle.

Accurate, large-scale monitoring is therefore essential for evaluating conservation programs, targeting resources, and developing effective management and policy decisions.

2. The Solution: A Multi-Sensor Remote Sensing Approach

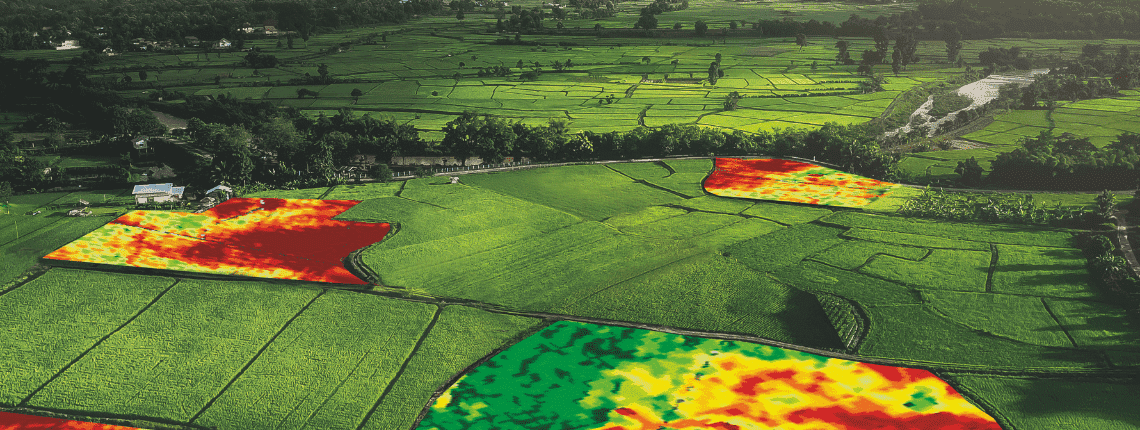

Precision agriculture leverages data science and technology to optimize farming, with remote sensing serving as a key enabling technology. By capturing how different surfaces interact with light and microwave energy, satellites can differentiate between green vegetation (cover crops), crop residue (conservation tillage), and bare soil. The most effective methodologies combine data from two primary satellite sensor types.

| Sensor Type | Description | Key Satellites/Sensors | Strengths | Limitations |
|---|---|---|---|---|
| Optical | Passively measures reflected sunlight across various spectral bands. Vegetation indices like the Normalized Difference Vegetation Index (NDVI) and Soil Adjusted Vegetation Index (SAVI) are calculated from these bands. | Landsat, Sentinel-2, SPOT, WorldView-3 | Well-established methods for vegetation analysis; high spectral resolution. | Obscured by clouds, haze, and darkness; indices can saturate. |
| SAR | Synthetic Aperture Radar actively transmits microwave signals and measures the reflected energy (backscatter). It can operate in different frequencies (e.g., X, C, L-band) and polarizations. | Sentinel-1, RADARSAT-2, ALOS PALSAR, TerraSAR-X | Penetrates clouds and operates day or night; sensitive to vegetation structure, surface roughness, and moisture. | Signal is complex and can be affected by multiple factors simultaneously (e.g., moisture, geometry). |
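
The two optical indices named in the table follow standard formulas. The sketch below computes them from near-infrared and red reflectance; the function names and sample reflectance values are illustrative assumptions rather than data from the studies, and the SAVI soil factor of 0.5 is the commonly used default.

```python
def ndvi(nir: float, red: float) -> float:
    """Normalized Difference Vegetation Index from NIR and red reflectance."""
    denom = nir + red
    if denom == 0:
        raise ValueError("NIR + red reflectance must be non-zero")
    return (nir - red) / denom


def savi(nir: float, red: float, soil_factor: float = 0.5) -> float:
    """Soil Adjusted Vegetation Index; soil_factor is the L term (0.5 assumed)."""
    return (1 + soil_factor) * (nir - red) / (nir + red + soil_factor)


# Illustrative reflectance values on a 0-1 scale (assumed, not measured).
pixels = {
    "bare soil": (0.25, 0.20),
    "dense cover crop": (0.45, 0.05),
}
for label, (nir, red) in pixels.items():
    print(f"{label}: NDVI={ndvi(nir, red):.2f}, SAVI={savi(nir, red):.2f}")
```

Both indices rise with green vegetation, while the extra soil term in SAVI dampens the influence of bright soil backgrounds in sparse canopies.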

A study conducted in an agricultural region of France demonstrated the power of this integrated approach for identifying winter land use types (winter crops, catch crops, crop residue, bare soil, grasslands). The findings were:

- Combined Sentinel-1 (SAR) and Sentinel-2 (Optical): Most accurate, with an Overall Accuracy (OA) of 81%.

- Sentinel-2 Only: More accurate than SAR alone, with an OA of 75%.

- Sentinel-1 Only: Least accurate of the three, with an OA of 70%.
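
Overall Accuracy is the share of validation samples assigned to the correct class, which is the diagonal of a confusion matrix divided by its total. A minimal sketch follows; the three-class matrix is hypothetical and is not taken from the French study.

```python
def overall_accuracy(confusion: list[list[int]]) -> float:
    """Fraction of samples on the diagonal of a square confusion matrix."""
    total = sum(sum(row) for row in confusion)
    if total == 0:
        raise ValueError("confusion matrix contains no samples")
    correct = sum(confusion[i][i] for i in range(len(confusion)))
    return correct / total


# Hypothetical matrix: rows are reference classes, columns are predictions.
example = [
    [45, 3, 2],   # winter crop
    [4, 38, 8],   # crop residue
    [1, 9, 40],   # bare soil
]
print(f"OA = {overall_accuracy(example):.2f}")
```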

3. Methodologies and Findings for Cover Crop Assessment

3.1. Combining Optical and SAR Data

The synergy between optical and SAR data is a recurring theme in advanced cover crop analysis.

- Improved Biomass Estimation: A study in Maryland found that combining a red-edge optical index from Sentinel-2 (NDVI_RE1) with SAR interferometric (InSAR) coherence from Sentinel-1 provided the best estimates for the biomass of cereal grass cover crops. This fusion improved biomass predictions by 4% in wheat, 5% in triticale, and 11% in cereal rye compared to using optical data alone.

- Species-Specific Performance: The accuracy of these models is often dependent on the specific crop species, likely due to differences in plant structure and leaf angle. The integrated model achieved high R² values for wheat (0.74) and triticale (0.81) but was less effective for cereal rye (0.34).
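
For readers less familiar with R², the sketch below shows how the coefficient of determination can be computed from field-measured and model-predicted biomass. It is a generic illustration, not the Maryland study's procedure, and the biomass values are made up for the example.

```python
def r_squared(observed: list[float], predicted: list[float]) -> float:
    """Coefficient of determination: 1 - SS_residual / SS_total."""
    if len(observed) != len(predicted) or not observed:
        raise ValueError("inputs must be non-empty and of equal length")
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    if ss_tot == 0:
        raise ValueError("observed values have no variance")
    return 1 - ss_res / ss_tot


# Hypothetical biomass values in kg/ha (quadrat samples vs. model output).
field_biomass = [1000.0, 2000.0, 3000.0, 4000.0]
model_biomass = [1200.0, 1900.0, 2800.0, 4100.0]
print(f"R² = {r_squared(field_biomass, model_biomass):.2f}")
```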

3.2. Tracking Phenology and Performance

Time-series analysis using frequent satellite imagery, such as the Harmonized Landsat and Sentinel (HLS) dataset with a potential 4-day repeat frequency, allows for detailed tracking of a cover crop's life cycle (phenology). By plotting vegetation indices over time, analysts can identify key performance metrics:

- Fall green-up date and momentum

- Maximum wintertime and springtime greenness (NDVI)

- Spring termination (eradication) date

These remotely sensed metrics, when calibrated with field data, can be translated into crucial performance indicators like aboveground biomass and percent green ground cover.
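
A simplified sketch of extracting such metrics from an NDVI time series is shown here. The 0.3 greenness threshold, the rule that termination is the first sub-threshold observation after the peak, and the sample series are all assumptions for illustration. The sketch also reports a single seasonal peak rather than separate winter and spring maxima.

```python
from datetime import date


def phenology_metrics(series: list[tuple[date, float]], threshold: float = 0.3) -> dict:
    """Derive green-up, peak, and termination dates from (date, NDVI) pairs."""
    if not series:
        raise ValueError("series must contain at least one observation")
    ordered = sorted(series)
    # Green-up: first observation at or above the (assumed) threshold.
    green_up = next((d for d, v in ordered if v >= threshold), None)
    # Peak greenness over the whole season.
    peak_date, peak_ndvi = max(ordered, key=lambda obs: obs[1])
    # Termination: first observation after the peak that falls below threshold.
    termination = next(
        (d for d, v in ordered if d > peak_date and v < threshold), None
    )
    return {
        "green_up": green_up,
        "peak_date": peak_date,
        "peak_ndvi": peak_ndvi,
        "termination": termination,
    }


# Hypothetical field-average NDVI values across one cover crop season.
season = [
    (date(2022, 10, 1), 0.15),
    (date(2022, 10, 21), 0.32),
    (date(2022, 11, 20), 0.48),
    (date(2023, 1, 15), 0.41),
    (date(2023, 3, 20), 0.62),
    (date(2023, 4, 15), 0.78),
    (date(2023, 5, 5), 0.22),
]
print(phenology_metrics(season))
```

In practice, cloud-contaminated observations would need to be filtered out first, and the date estimates are only as precise as the gaps between clear images allow.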

3.3. Overcoming the Saturation Problem

A significant limitation of traditional optical indices like NDVI is saturation. The index value stops increasing at higher levels of vegetation density, making it difficult to differentiate between good and excellent cover crop performance.

- Saturation Point: Research shows that NDVI tends to saturate at biomass levels around 1500-1900 kg/ha or approximately 80% ground cover.

- Mitigation Strategies:

  - Red-Edge Bands: VIs that incorporate the red-edge portion of the spectrum (available on sensors like Sentinel-2) are more robust to saturation issues than standard red-band indices.

  - SAR Integration: As SAR is sensitive to vegetation structure and volume, its integration can help improve estimates in high-biomass scenarios.

  - Future Research: Despite these improvements, saturation remains a challenge. Future work aims to address this by incorporating weather variables, machine learning models, and process-based crop-soil simulation models.

3.4. SAR Frequency and InSAR Applications

The effectiveness of SAR is highly dependent on its configuration.

- Frequency Sensitivity: Longer wavelengths (like L-band, ~20 cm) penetrate deeper into the vegetation canopy and are more sensitive to the entire plant structure (e.g., Leaf Area Index, LAI). A study on corn found L-band backscatter had the highest correlation with LAI (r = 0.90-0.96). Shorter wavelengths (like X-band, ~3 cm) interact mainly with the top of the canopy and were poorly correlated with LAI.

- Interferometric SAR (InSAR): This advanced technique measures the phase difference between two SAR images taken at different times to detect millimeter-scale surface motion. In agriculture, this is invaluable for monitoring land subsidence caused by groundwater extraction or tracking the impacts of soil compaction.
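
The band wavelengths quoted above follow directly from the radar frequency via wavelength = c / f. The sketch below works this out for nominal center frequencies of three of the sensors mentioned in this document; the frequencies are typical published values and should be treated as assumptions here.

```python
SPEED_OF_LIGHT_M_S = 299_792_458  # metres per second


def wavelength_cm(frequency_ghz: float) -> float:
    """Convert a radar frequency in GHz to a wavelength in centimetres."""
    if frequency_ghz <= 0:
        raise ValueError("frequency must be positive")
    return SPEED_OF_LIGHT_M_S / (frequency_ghz * 1e9) * 100


# Nominal center frequencies (assumed for illustration).
bands_ghz = {
    "X-band (TerraSAR-X)": 9.65,
    "C-band (Sentinel-1)": 5.405,
    "L-band (ALOS PALSAR)": 1.27,
}
for name, freq in bands_ghz.items():
    print(f"{name}: {wavelength_cm(freq):.1f} cm")
```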

4. Methodologies and Findings for Crop Residue Assessment

Remote sensing can also be used to map crop residue, a key indicator of conservation tillage.

- Spectral Detection: The most effective method involves measuring the unique absorption feature of cellulose and lignin using shortwave infrared (SWIR) bands, particularly near 2100 nm. This requires specialized sensors like those on the WorldView-3 satellite. High accuracy (R² = 0.94) has been achieved in mapping residue on fields with low green vegetation (NDVI < 0.3).

- Alternative Indices: For more common satellites like Landsat and Sentinel, the Normalized Difference Tillage Index (NDTI), which uses two different SWIR bands, can be used.

- Key Challenge (Moisture): Surface moisture is a major source of interference, as wet soil can be confused with residue. However, this effect can be corrected by using a satellite-derived water index to adjust the residue calculations, greatly improving accuracy in wet or irrigated areas.
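
As a rough illustration of the NDTI approach, the sketch below computes the index as the normalized difference of two SWIR reflectances (roughly 1600 nm and 2200 nm on Landsat-class sensors) and skips pixels with substantial green vegetation. Reusing the NDVI < 0.3 cutoff from the WorldView-3 result as a mask for NDTI is an assumption, as are the function names and sample values. The moisture correction is deliberately left out because the source does not specify its form.

```python
from typing import Optional


def ndti(swir1: float, swir2: float) -> float:
    """Normalized Difference Tillage Index from two SWIR reflectances."""
    return (swir1 - swir2) / (swir1 + swir2)


def residue_index(
    swir1: float, swir2: float, nir: float, red: float, max_ndvi: float = 0.3
) -> Optional[float]:
    """Return NDTI, or None when green vegetation would confound the signal."""
    ndvi = (nir - red) / (nir + red)
    if ndvi >= max_ndvi:
        return None  # too much green cover for a reliable residue estimate
    return ndti(swir1, swir2)


# Illustrative reflectances (assumed): a residue-covered pixel and a green one.
print(residue_index(swir1=0.30, swir2=0.24, nir=0.28, red=0.20))
print(residue_index(swir1=0.25, swir2=0.15, nir=0.45, red=0.05))
```

Higher NDTI values generally indicate more residue, but converting the index to percent cover still requires calibration against field measurements.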

5. The Critical Role of Field Data

Remote sensing models are not viable without extensive in-field data collection for calibration and validation. This "ground-truthing" ensures that satellite-derived indices are accurately converted into meaningful agricultural metrics.

5.1. Essential Data Types

| Data Category | Description and Collection Method | Importance |
|---|---|---|
| Physical Samples | Physical collection of plants within a defined area (quadrat) to measure biomass (weight), nitrogen and carbon content, and growth stage. | Provides the direct measurements needed to calibrate satellite indices to biophysical properties. |
| Ground Cover Photos | In-field nadir (top-down) photographs taken from a consistent height (e.g., 4 meters). Processed using software ("Green Fraction" Python code or "SamplePoint") to calculate the percentage of green cover or residue cover. | Considered the "best tool" for calibrating both cover crop and residue models. It is faster than manual methods like line-point transects while providing highly correlated results (R² = 0.97 for residue). |
| Agronomic Data | Management records, often available through government cost-share enrollment programs (e.g., Maryland Department of Agriculture). Includes field boundaries, crop species, planting/termination dates, planting methods, and previous crops. | "Extremely useful" for validating phenology models (e.g., confirming termination dates) and for analyzing how management practices affect remote sensing outcomes. |
| Climate Data | Local weather information, particularly Growing Degree Days (GDD). | Necessary to account for the significant effect of winter climate variation on cover crop growth and remote sensing signals. |
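
Growing Degree Days are commonly computed by averaging each day's maximum and minimum temperature and subtracting a base temperature, with negative values set to zero. The sketch below uses that simple averaging method; the 4 °C base temperature and the sample temperatures are assumptions for illustration, since the appropriate base depends on the species.

```python
def growing_degree_days(t_max_c: float, t_min_c: float, base_c: float = 4.0) -> float:
    """Daily GDD using the simple averaging method (base temperature assumed)."""
    return max(0.0, (t_max_c + t_min_c) / 2 - base_c)


def cumulative_gdd(daily_temps: list[tuple[float, float]], base_c: float = 4.0) -> float:
    """Sum daily GDD over a list of (t_max, t_min) pairs in degrees Celsius."""
    return sum(growing_degree_days(t_max, t_min, base_c) for t_max, t_min in daily_temps)


# Three hypothetical days of (max, min) air temperature in °C.
week = [(12.0, 2.0), (8.0, -2.0), (15.0, 5.0)]
print(cumulative_gdd(week))
```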

5.2. Data Collection Best Practices & Challenges

- Spatial Variability: In-field variability is significant. Sampling protocols must account for this by taking multiple samples (e.g., 3 quadrats and >10 photos per field) spaced far enough apart (e.g., 60m) to fall in different satellite pixels.

- Avoiding Errors: Samples should avoid field edges and irregular areas. Mismatches between the date of field sampling and the date of the satellite overpass are a known source of error.

- Survey Limitations: Roadside surveys have proven problematic for estimating residue cover, as view angle and conditions at the field edge can skew observations, particularly in distinguishing between medium (30-60%) and high (>60%) cover classes.
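
The spacing guidance can be checked before heading to the field. The sketch below tests whether planned sample locations are at least 60 m apart and fall in different satellite pixels; it assumes coordinates in a projected system measured in meters, a 30 m pixel grid aligned to the coordinate origin, and hypothetical point locations.

```python
from itertools import combinations
from math import floor, hypot


def min_pairwise_distance(points: list[tuple[float, float]]) -> float:
    """Smallest straight-line distance (m) between any two sample points."""
    if len(points) < 2:
        raise ValueError("need at least two points")
    return min(hypot(x2 - x1, y2 - y1) for (x1, y1), (x2, y2) in combinations(points, 2))


def check_sampling_layout(
    points: list[tuple[float, float]],
    min_spacing_m: float = 60.0,
    pixel_size_m: float = 30.0,
) -> dict:
    """Report whether points meet the spacing rule and occupy distinct pixels."""
    # Pixel index assumes a grid whose origin coincides with (0, 0).
    pixels = {(floor(x / pixel_size_m), floor(y / pixel_size_m)) for x, y in points}
    return {
        "spacing_ok": min_pairwise_distance(points) >= min_spacing_m,
        "distinct_pixels": len(pixels) == len(points),
    }


# Hypothetical quadrat locations in projected meters.
quadrats = [(100.0, 100.0), (170.0, 110.0), (120.0, 180.0)]
print(check_sampling_layout(quadrats))
```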