Why "Tune" a Model of a River?

Imagine trying to play a guitar that's out of tune. You might play the right notes in the right order, but the sound won't match the song. To fix it, you must adjust the tuning pegs until each string produces the correct pitch. Similarly, imagine baking a cake from a new recipe. You might follow the steps perfectly, but the result might be too sweet. The next time, you'll adjust the amount of sugar to better match the taste you want.

A hydrologic model is a computer simulation of a watershed - an area of land that drains all its water into a single river or lake. It simulates complex processes like rainfall, infiltration into the soil, and runoff into streams. Calibration is the process of "tuning" this model. We adjust its internal settings, called parameters, so that its simulated output (like river flow) matches real-world, observed data as closely as possible.

However, the goal of modeling isn't to create a perfect replica of reality, which is impossible. Instead, we follow a guiding principle:

Calibration is not about making a "perfect" model, but about making a useful one. It's a process of understanding the model's limitations and intelligently quantifying its uncertainties. By doing so, we can build a more reliable tool for forecasting floods, managing water resources, and understanding the impact of environmental changes. This guide will walk you through the standard four-step process for calibrating a hydrologic model.

1. The Four-Step Calibration Workflow

Calibrating a model is a structured, iterative process. Here is a high-level overview of the workflow we will explore in detail.

- Preliminary Analysis: Identifying the model's most influential parameters.

- Setting the Goal: Choosing a score (an objective function) to define what a "good" model fit looks like.

- Running the Calibration: Using automated tools to find the best parameter ranges.

- Validation: Testing if the calibrated model works on new data it hasn't seen before.

Step 1: Preliminary Analysis - Identifying What Matters

Hydrologic models can have dozens of parameters representing physical characteristics like soil conductivity, slope length, or vegetation cover. Adjusting every single one is inefficient and impractical. The first and most critical step is to figure out which parameters have the biggest impact on the output we care about, such as soil erosion or river discharge.

The "What" and "Why" of Sensitivity Analysis

Sensitivity Analysis (SA) is the process of determining how a model's output responds to changes in its input parameters. The primary purpose of SA is to help us focus our calibration efforts on the parameters that contribute the most to the model's output and its uncertainty. This allows us to simplify a complex problem by concentrating on what truly matters.

There are two main approaches to Sensitivity Analysis:

- Local SA ("One-Factor-at-a-Time"): This is the simplest method. You change one parameter by a small amount while keeping all other parameters fixed at their chosen base values. While easy to understand, this method has a major limitation: the result only describes the model's behavior around that one base point, so it can be misleading for complex models where parameters interact with each other in non-linear ways.

- Global SA: This is a more robust and widely used method. Instead of changing one parameter at a time, it explores the entire possible range for all parameters simultaneously. This approach is much better at capturing the overall effect of a parameter, including how its influence changes depending on the values of other parameters (its interactions) and its non-linear effects.
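To make the difference between the two approaches concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: `toy_model` is a made-up three-parameter function (not RHEM or any real hydrologic model), and `global_screening` is a simplified screening method that averages one-at-a-time effects over many random base points, not a full variance-based analysis.

```python
import numpy as np

def toy_model(p):
    """Hypothetical stand-in for a model output driven by three parameters."""
    a, b, c = p
    return a * b + 0.1 * c  # a and b interact; c has a weak additive effect

def local_oat(model, base, delta=0.01):
    """Local SA: nudge one parameter at a time around a single base point."""
    base = np.asarray(base, dtype=float)
    y0 = model(base)
    effects = np.zeros(base.size)
    for i in range(base.size):
        p = base.copy()
        p[i] += delta
        effects[i] = abs(model(p) - y0) / delta
    return effects

def global_screening(model, lower, upper, n_base=200, delta_frac=0.05, seed=0):
    """Simplified global SA: average one-at-a-time effects over random base points."""
    rng = np.random.default_rng(seed)
    lower = np.asarray(lower, dtype=float)
    upper = np.asarray(upper, dtype=float)
    span = upper - lower
    step = delta_frac * span
    effects = np.zeros((n_base, lower.size))
    for k in range(n_base):
        # Random base point, leaving room for the step inside the range
        base = lower + rng.random(lower.size) * (span - step)
        y0 = model(base)
        for i in range(lower.size):
            p = base.copy()
            p[i] += step[i]
            # Dividing by the fractional step scales each effect by the
            # parameter's range, so effects are comparable across parameters
            effects[k, i] = abs(model(p) - y0) / delta_frac
    return effects.mean(axis=0)

# Local SA at a base point where b = 0: parameter a appears to have no effect
local_effects = local_oat(toy_model, base=[0.5, 0.0, 0.5])

# Global screening over the full ranges reveals that a matters after all
global_effects = global_screening(toy_model, lower=[0, 0, 0], upper=[1, 1, 1])

print("local :", local_effects)
print("global:", global_effects)
```

In this toy case the local analysis ranks `a` as irrelevant only because of where the base point happens to sit, while the global screening ranks `a` and `b` above `c`. That is the kind of interaction effect a local analysis can miss.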

The Outcome: A Ranked List of Important Parameters

A successful sensitivity analysis provides a ranked list of the model's parameters, showing which ones are most influential. Based on a study of the Rangeland Hydrology and Erosion Model (RHEM), these parameters can be classified into three distinct groups.

- Group 1: The Critical Few. These are parameters with the highest overall effect on the output and the highest interaction with other parameters. For example, in the RHEM study, total rainfall was found to be in this group. These parameters become the primary focus of the calibration process.

- Group 2: The Important Middle. These parameters have a median effect on the output. They are still significant and are typically included in calibration, but they are less dominant than the critical few.

- Group 3: The Less Influential. These parameters have the least impact on the model's output. In many cases, these can be left at their default values without significantly affecting the model's performance, saving valuable time and computational resources.

After identifying our key parameters, we need a clear and consistent way to measure how well our model performs as we start "tuning" them. This brings us to the next step: defining a mathematical goal.

Step 2: Setting the Goal - Choosing an Objective Function

To have a computer automatically "tune" a model, we can't just tell it to "make the graph look good." We need a precise, mathematical goal for it to optimize. This goal is called an objective function. A critical point to understand is that the choice of an objective function is subjective; using different objective functions will produce different calibration results because each one prioritizes a different aspect of the model's performance.

What is an Objective Function?

An objective function is a formula that calculates a single score reflecting the difference between the model's simulated values and the real-world observed values. The calibration algorithm's job is to systematically adjust the model's parameters to either maximize or minimize this score, depending on the function.

Common Objective Functions and What They Prioritize

There are many different objective functions, each designed to focus on a specific aspect of the model's fit. Here is a comparison of three popular choices used in hydrologic modeling.

| Objective Function | Goal | What It Prioritizes |
|---|---|---|
| NS (Nash-Sutcliffe Efficiency) | Maximize | The overall shape and timing of the hydrograph. It heavily penalizes errors in peak flow timing and magnitude, making it a good general-purpose choice for matching the river's dynamic behavior. |
| KGE (Kling-Gupta Efficiency) | Maximize | A more holistic fit, balancing the correlation (timing), bias (average flow), and variability of the flow. |
| PBIAS (Percent Bias) | Minimize (absolute value; 0 is ideal) | The overall volume of water. Its sign tells you if the model consistently overestimates or underestimates the total flow. |
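For readers who want to see these scores as formulas, the following sketch implements the three objective functions with NumPy, using their commonly published definitions. The function names and the sample numbers are illustrative assumptions. Note that the sign convention for PBIAS varies between tools; the version below treats positive values as underestimation.

```python
import numpy as np

def nse(obs, sim):
    """Nash-Sutcliffe Efficiency: 1 is a perfect fit; 0 is no better than the observed mean."""
    obs, sim = np.asarray(obs, dtype=float), np.asarray(sim, dtype=float)
    return 1.0 - np.sum((obs - sim) ** 2) / np.sum((obs - obs.mean()) ** 2)

def kge(obs, sim):
    """Kling-Gupta Efficiency: combines correlation, variability ratio and bias ratio."""
    obs, sim = np.asarray(obs, dtype=float), np.asarray(sim, dtype=float)
    r = np.corrcoef(obs, sim)[0, 1]   # timing / linear correlation
    alpha = sim.std() / obs.std()     # variability ratio
    beta = sim.mean() / obs.mean()    # bias ratio
    return 1.0 - np.sqrt((r - 1) ** 2 + (alpha - 1) ** 2 + (beta - 1) ** 2)

def pbias(obs, sim):
    """Percent bias: 0 is ideal. Here positive means the model underestimates total flow."""
    obs, sim = np.asarray(obs, dtype=float), np.asarray(sim, dtype=float)
    return 100.0 * np.sum(obs - sim) / np.sum(obs)

# Made-up flows: the simulation overestimates every value by 20%
observed = np.array([1.0, 3.0, 8.0, 4.0, 2.0])
simulated = 1.2 * observed

print("NS   :", round(nse(observed, simulated), 3))
print("KGE  :", round(kge(observed, simulated), 3))
print("PBIAS:", round(pbias(observed, simulated), 1))
```

The same simulation gets a different verdict from each score: the timing is perfect, so only the volume and variability terms are penalized. This is why the choice of objective function shapes the calibration result.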

With a clear goal defined by our objective function, we are now ready to let the computer begin the search for the best set of parameters to achieve it.

Step 3: Running the Calibration - Finding the Best Parameter Ranges

Once we have our key parameters from Step 1 and our objective function from Step 2, we can start the automated calibration process. This step uses a smart search algorithm to explore different parameter combinations and find the ones that produce the best score on our objective function.

From Single Values to Uncertainty Bands

Modern calibration has shifted away from finding a single "best" value for each parameter, because we can never know the true value of a physical parameter (like soil conductivity) for an entire watershed with perfect accuracy. The alternative, known as stochastic modeling, acknowledges this inherent uncertainty.

Instead of a single value, the goal is to find a range of likely or plausible values for each key parameter. This gives us a more honest and realistic understanding of the model's potential behavior. Two key concepts are central to this process:

- Latin Hypercube Sampling: To find the best parameter ranges, the algorithm needs to run the model many times with different parameter values. Latin Hypercube is an efficient sampling method that divides each parameter's range into as many equal-probability intervals as there are model runs and draws exactly one value from each interval. This prevents the algorithm from repeatedly testing clustered values (e.g., only high values for one parameter and low for another) and guarantees that the full range of each parameter is covered.

- The 95PPU (95% Prediction Uncertainty) Band: This is the primary result of the calibration process. After running the model hundreds or thousands of times using the likely parameter ranges found by the algorithm, we get a distribution of possible outputs. The 95PPU is the band that contains 95% of these simulation outputs. The ultimate goal is to find a 95PPU band that brackets most of the observed real-world data while being as narrow as possible, balancing accuracy and certainty.
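The two concepts above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the implementation used by any particular calibration tool: `toy_model`, its two parameters, the rainfall values and the parameter ranges are all made up, and `coverage` and `relative_width` are simply one way to put numbers on "brackets most of the observations" and "as narrow as possible".

```python
import numpy as np

def latin_hypercube(n_samples, lower, upper, seed=0):
    """Draw n_samples parameter sets with exactly one value per stratum per parameter."""
    rng = np.random.default_rng(seed)
    lower = np.asarray(lower, dtype=float)
    upper = np.asarray(upper, dtype=float)
    n_params = lower.size
    # One random point inside each of n_samples equal-width strata of [0, 1)
    unit = (np.arange(n_samples)[:, None]
            + rng.random((n_samples, n_params))) / n_samples
    # Shuffle the strata independently per parameter so pairings are random
    for j in range(n_params):
        unit[:, j] = rng.permutation(unit[:, j])
    return lower + unit * (upper - lower)

def ppu95(simulations):
    """Band between the 2.5th and 97.5th percentiles of the runs at each time step."""
    return np.percentile(simulations, [2.5, 97.5], axis=0)

def band_quality(obs, low, high):
    """Fraction of observations inside the band, and band width relative to obs spread."""
    obs = np.asarray(obs, dtype=float)
    coverage = np.mean((obs >= low) & (obs <= high))
    relative_width = np.mean(high - low) / obs.std()
    return coverage, relative_width

# Hypothetical inputs and model, for illustration only
rain = np.array([0.0, 5.0, 20.0, 8.0, 2.0, 0.0])

def toy_model(params):
    runoff_coeff, baseflow = params
    return runoff_coeff * rain + baseflow

samples = latin_hypercube(500, lower=[0.2, 0.5], upper=[0.6, 1.5])
sims = np.array([toy_model(p) for p in samples])  # shape: (runs, time steps)
low, high = ppu95(sims)

observed = toy_model([0.4, 1.0])  # stand-in for real measurements
coverage, relative_width = band_quality(observed, low, high)
print("coverage:", coverage, "relative width:", round(relative_width, 2))
```

Narrowing the parameter ranges shrinks the band (a smaller relative width) but risks leaving observations outside it (a lower coverage). That is the trade-off between accuracy and certainty described above.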

Finding a good set of parameter ranges that performs well on our training data is a huge step. However, it's not the end of the process. We must now test if our "tuned" model can work on data it has never seen before.

Step 4: Validation - Does the Model Actually Work?

A common pitfall in modeling is "overfitting," where the model becomes so finely tuned to the calibration data that it loses its ability to make general predictions. It's like a student who memorizes the answers to a practice exam but can't solve new problems. Validation is the crucial final step to check the model's performance on an independent set of data that was not used during calibration.

How to Validate Your Model

The validation process is a straightforward test of the calibrated model's robustness.

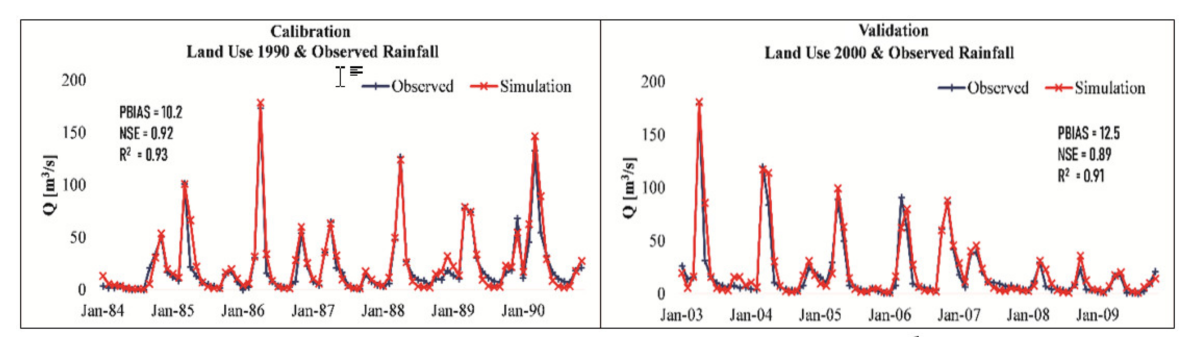

- Split the Data: Before calibration begins, divide all available observation data into two independent sets: one for calibration and one for validation. Critically, ensure both datasets share similar statistical properties (like mean and variance) to provide a fair test of the model's performance.

- Calibrate the Model: Perform Steps 1-3 (Sensitivity Analysis, Objective Function selection, and Automated Calibration) using only the calibration dataset. This process generates the final, "tuned" parameter ranges.

- Test the Model: Run the now-calibrated model with its final parameter ranges, but this time, use input data (like rainfall) from the validation time period.

- Evaluate Performance: Compare the model's simulated output (the 95PPU band) against the observed data from the validation dataset. If the model still brackets the observations well and the objective function score is still good, it gives us confidence that the model is robust and useful for making predictions about the future.
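The split-sample test described in the steps above can be sketched as follows. This is a deliberately simplified illustration: the data are synthetic, `toy_model` is a made-up two-parameter model, and the "calibration" is just random sampling that keeps the best-scoring 10% of parameter sets. Real calibration tools use more sophisticated search algorithms.

```python
import numpy as np

rng = np.random.default_rng(42)

def toy_model(params, rain):
    """Hypothetical model: flow = runoff_coeff * rain + baseflow."""
    runoff_coeff, baseflow = params
    return runoff_coeff * rain + baseflow

def nse(obs, sim):
    """Nash-Sutcliffe Efficiency (1 is a perfect fit)."""
    return 1.0 - np.sum((obs - sim) ** 2) / np.sum((obs - obs.mean()) ** 2)

# Synthetic "observations": known parameters plus noise (illustration only)
rain = rng.gamma(shape=0.8, scale=10.0, size=400)
obs = toy_model([0.4, 1.0], rain) + rng.normal(0.0, 0.5, size=rain.size)

# 1. Split the data before calibration begins
split = rain.size // 2
rain_cal, obs_cal = rain[:split], obs[:split]
rain_val, obs_val = rain[split:], obs[split:]
print("calibration mean/std:", obs_cal.mean().round(2), obs_cal.std().round(2))
print("validation  mean/std:", obs_val.mean().round(2), obs_val.std().round(2))

# 2. Calibrate using ONLY the calibration data: keep the best 10% of candidates
candidates = rng.uniform([0.1, 0.0], [0.9, 3.0], size=(2000, 2))
scores = np.array([nse(obs_cal, toy_model(p, rain_cal)) for p in candidates])
retained = candidates[scores >= np.quantile(scores, 0.9)]

# 3. Test: rerun the retained parameter sets with validation-period rainfall
sims_val = np.array([toy_model(p, rain_val) for p in retained])
low, high = np.percentile(sims_val, [2.5, 97.5], axis=0)

# 4. Evaluate: how well does the band bracket unseen observations?
coverage_val = np.mean((obs_val >= low) & (obs_val <= high))
nse_val = nse(obs_val, np.median(sims_val, axis=0))
print("validation coverage:", round(float(coverage_val), 2))
print("validation NS of median run:", round(float(nse_val), 2))
```

The key design point is that `obs_val` is never touched during step 2. If the scores in step 4 drop sharply compared with the calibration period, that is a sign of overfitting or of a mismatch between the two periods.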

Embracing Uncertainty for Better Predictions

The calibration of a hydrologic model is an iterative four-step process: identifying the most important parameters through preliminary analysis, defining success with an objective function, finding the best parameter ranges through automated calibration, and confirming performance through validation.

The modern approach to this process has fundamentally shifted. The goal is no longer to find one "right answer" or a single "best-fit" simulation. Instead, the focus is on intelligently quantifying and managing uncertainty. By treating parameters as ranges rather than single values, we produce a "95% Prediction Uncertainty" band that provides a more honest and reliable picture of what the watershed might do. This embrace of uncertainty is what transforms a model from a simple, wrong representation into a truly useful tool for science and decision-making.